Lights, Animation, Interaction! (Society for Animation Studies 34th Annual Conference)

I've attended and given talks at the Society for Animation Studies annual conference three years in a row!

For the past two years, I discussed how "anime" is made and how we (computer scientists) can support the process. Those talks were the fruitful outcome of my collaboration at Arch Research, the R&D division of the Japanese animation production company Arch Inc. The slides are available at the Arch Research website.

Drawing on my experience at my primary affiliation, the AIST TextAlive Project, which supports live musical performances on physical and virtual stages, I switched topics this year: how do "animating lights" contribute to stage performances? The slides are already available online.

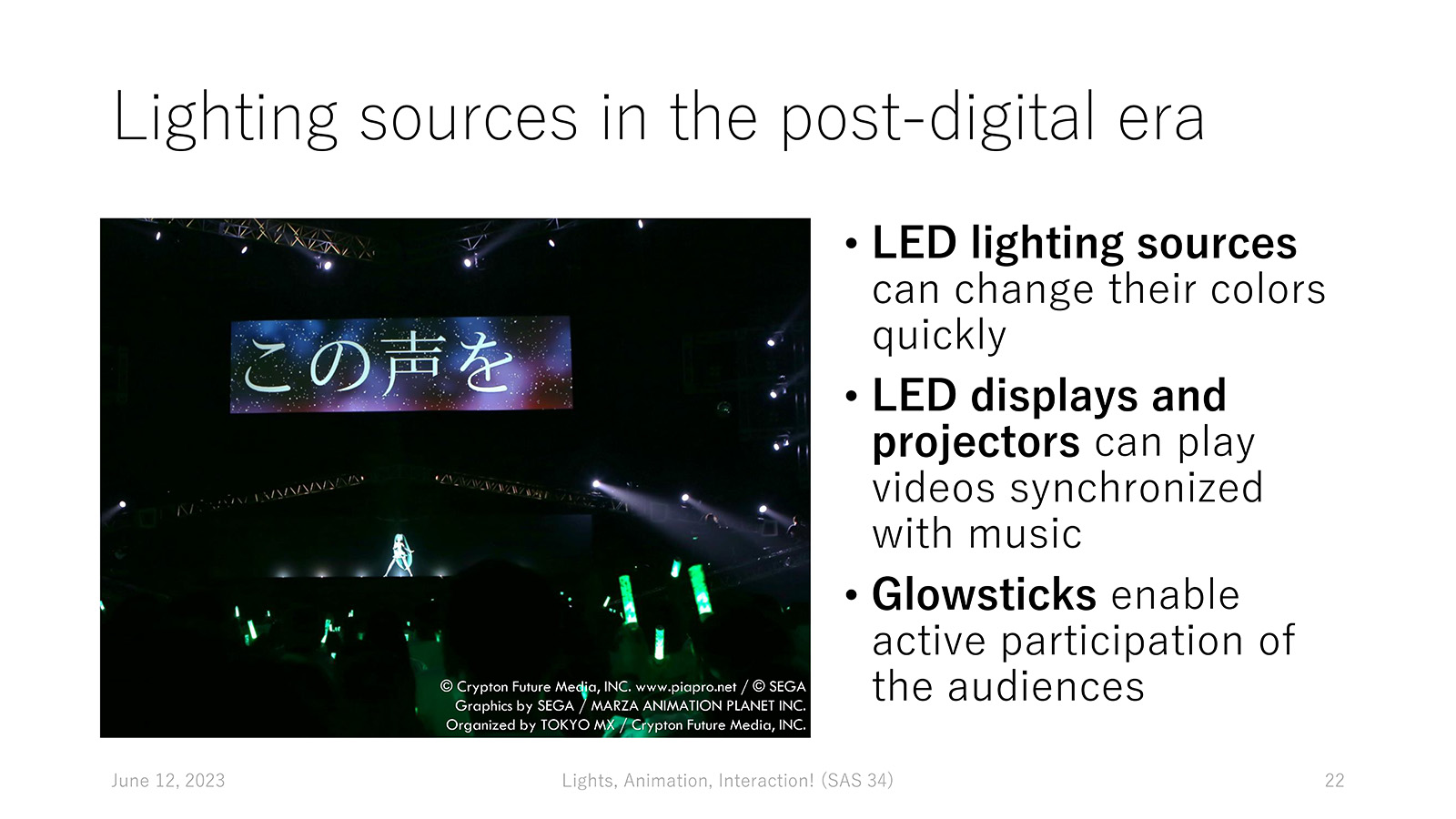

It all started with an impression I had while helping stage directors author lyric videos projected onto LED screens: they seemed to prefer simple animation effects over complex, information-heavy ones. It was as if the directors regarded the videos as yet another kind of "stage lighting," just like beams of light emitted from conventional lighting sources.

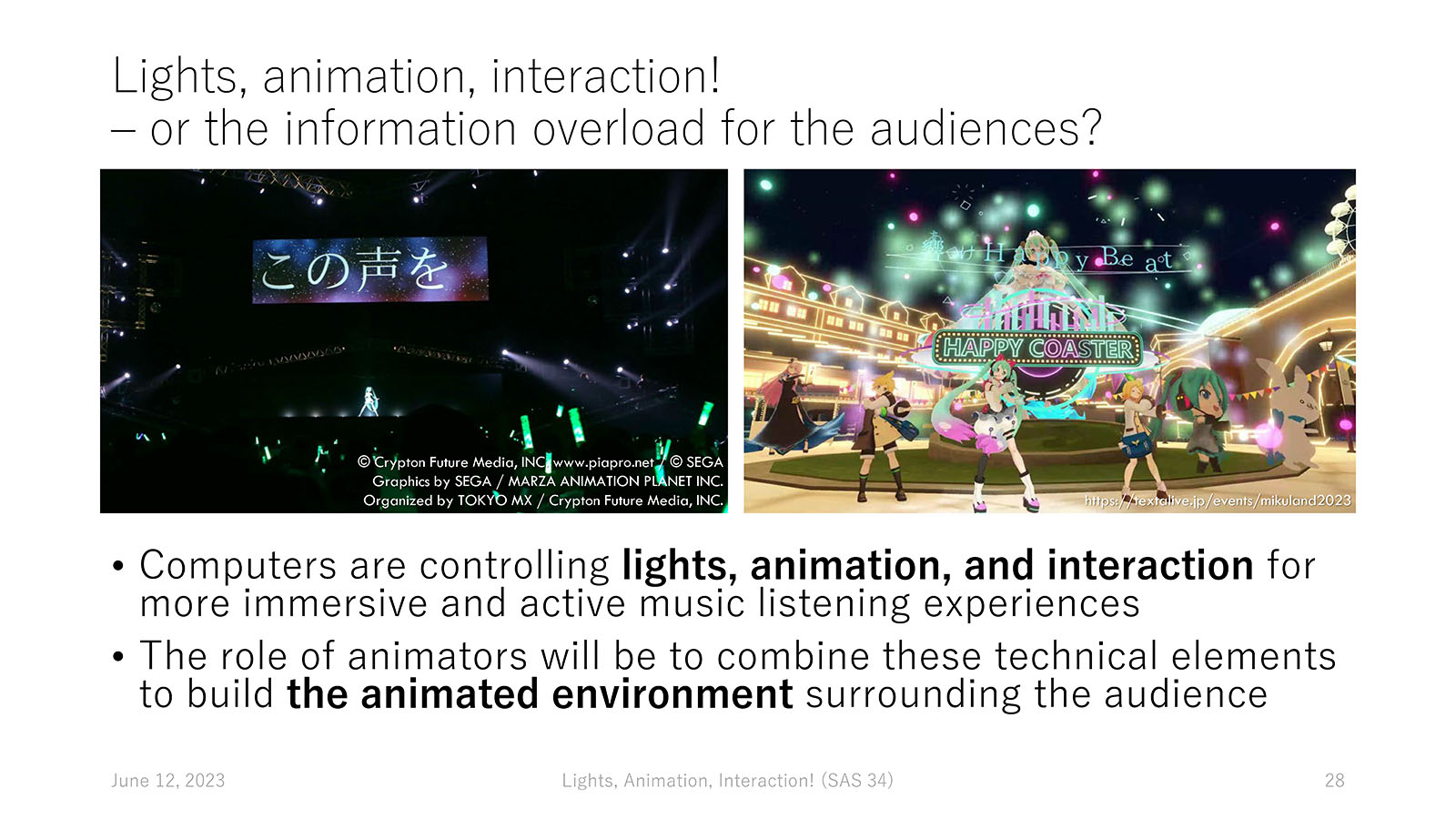

In my talk, I revealed the rich design space of "animating lights" for live music performances with concrete examples of lighting mechanisms, a brief history of the related technologies enabling such an "animated environment," and the potential information overload caused by computer-controlled lighting. The role of "animators" in this context is to combine various technical elements to design an immersive and active music listening experience for the audience with an appropriate amount of information.

While I enjoyed my third SAS conference, my only regret is that I attended all three online. I think the talk went well: I got an email from an audience member who listened to my talk onsite and became interested in the topic.
